Submitted to KDD 2026 (32nd ACM SIGKDD Conference on Knowledge Discovery and Data Mining), Jeju, Korea.

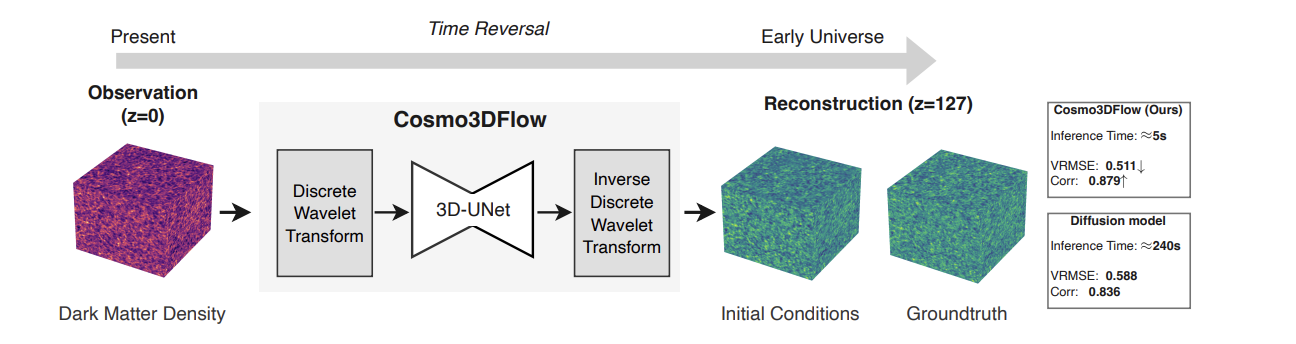

Reconstructing the early Universe from the evolved present-day Universe is a challenging and computationally demanding problem in modern astrophysics. We devise a novel generative framework, Cosmo3DFlow, designed to address dimensionality and sparsity, the critical bottlenecks inherent in current state-of-the-art methods for cosmological inference. Cosmo3DFlow combines 3D Discrete Wavelet Transform (DWT) flow matching, which effectively represents high-dimensional cosmological data, with Temporal Homology (TH), which decouples high-frequency details from low-frequency structures through spatial compression. The resulting wavelet-space velocity fields facilitate stable ordinary differential equation (ODE) solvers with large step sizes. Using 3D N-body cosmological simulations at T=128, we achieve 10x faster sampling than diffusion models, combining a 5x reduction in integration steps with lower per-step computational cost from wavelet compression. Our results enable initial conditions to be sampled in seconds, compared to minutes for previous methods.
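As a rough illustration of the spatial compression a 3D DWT provides, the sketch below applies one level of a Haar transform to a small random volume. The choice of the Haar wavelet, the 16x16x16 grid, and the helper names `haar_dwt_1d` and `haar_dwt_3d` are assumptions made for this example; the abstract does not state which wavelet or implementation Cosmo3DFlow uses.

```python
import numpy as np

def haar_dwt_1d(x, axis):
    """One level of the orthonormal Haar transform along a single axis."""
    even = np.take(x, np.arange(0, x.shape[axis], 2), axis=axis)
    odd = np.take(x, np.arange(1, x.shape[axis], 2), axis=axis)
    low = (even + odd) / np.sqrt(2.0)   # local averages (low frequency)
    high = (even - odd) / np.sqrt(2.0)  # local differences (high frequency)
    return low, high

def haar_dwt_3d(volume):
    """Split a 3D volume into 8 subbands, each at half resolution per axis."""
    bands = {"": volume}
    for axis in range(3):
        next_bands = {}
        for name, data in bands.items():
            low, high = haar_dwt_1d(data, axis)
            next_bands[name + "L"] = low
            next_bands[name + "H"] = high
        bands = next_bands
    return bands

# Hypothetical stand-in for a density field; real inputs would come from simulations.
rng = np.random.default_rng(0)
field = rng.standard_normal((16, 16, 16))
subbands = haar_dwt_3d(field)

for name, data in sorted(subbands.items()):
    print(name, data.shape)
```

The `LLL` subband holds the coarse structure at one eighth of the original voxel count, while the other seven subbands hold the high-frequency detail. Because this transform is orthonormal, the total energy of the subbands equals that of the input field.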

Accepted to the IEEE International Workshop on Large Language Models for Finance, The IEEE International Conference on Big Data (IEEE BigData 2024), Washington DC, USA. 2024 December 15-18

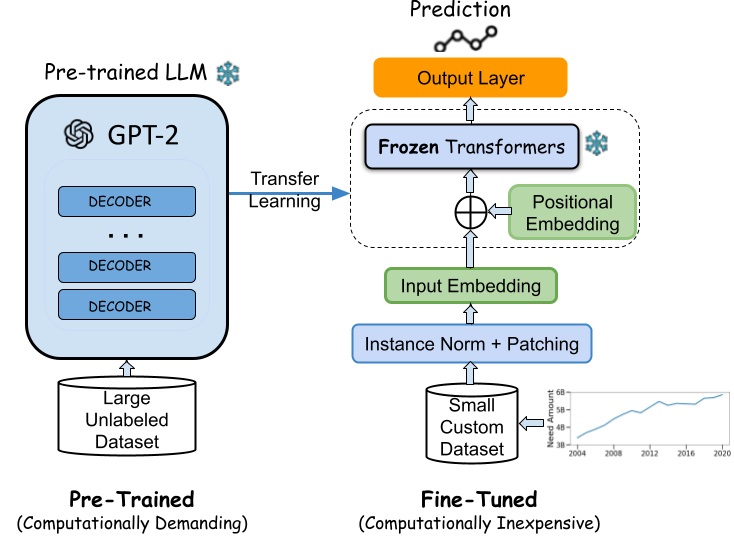

Considering the difficulty of financial time series forecasting in financial aid, one of the current main challenges is integrating big data analytics into financial services. One modern approach is to utilize "predictive analysis", analogous to forecasting financial trends. However, many of these time series models face obstacles: limited historical data and high generational financial interpretation hinder the development of effective predictive models that balance accuracy with efficient runtime and memory usage. Pre-trained foundation models are envisioned to address these challenging tasks. We use state-of-the-art time series models, including pre-trained LLMs (with GPT-2 as the backbone), transformers, and linear models, to demonstrate their ability to outperform traditional approaches, even with minimal ("few-shot") or no fine-tuning ("zero-shot"). Our benchmark study, which covers financial aid alongside seven other time series tasks, shows the potential of using LLMs for scarce financial datasets.

Accepted to the AAAI-24 Doctoral Consortium, The 38th Annual AAAI Conference on Artificial Intelligence (AAAI-24), Vancouver, Canada. 2024 February 27.

The widespread use of Artificial Intelligence (AI) has highlighted the importance of understanding AI model behavior. This understanding is crucial for practical decision-making, assessing model reliability, and ensuring trustworthiness. Interpreting time series forecasting models faces unique challenges compared to other AI models, primarily due to two fundamental factors: the dependencies between time steps and the evolving importance of input features over time. My thesis addresses these challenges by aiming for more precise explanations of feature interactions, uncovering spatiotemporal patterns, and demonstrating the practical applicability of these interpretability techniques using real-world datasets and state-of-the-art deep learning models.

Accepted to the AI for Time-Series workshop. The 38th Annual AAAI Conference on Artificial Intelligence (AAAI-24), Vancouver, Canada. 2024 February 27.

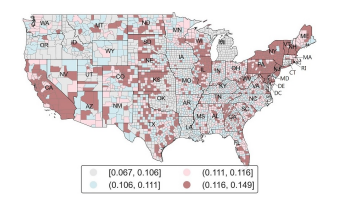

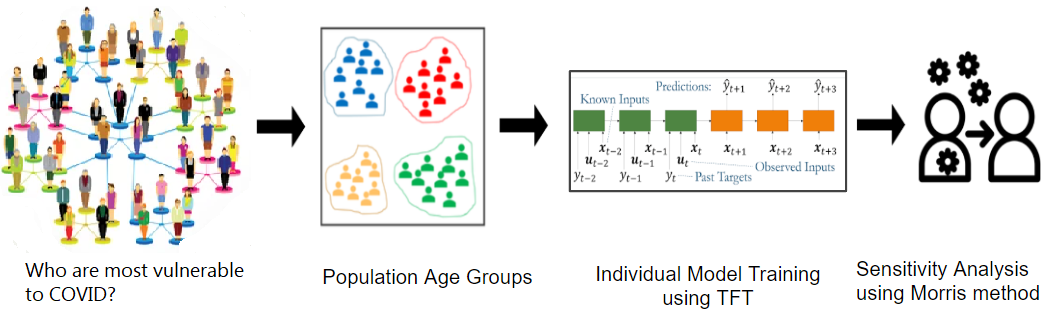

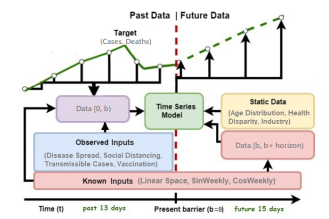

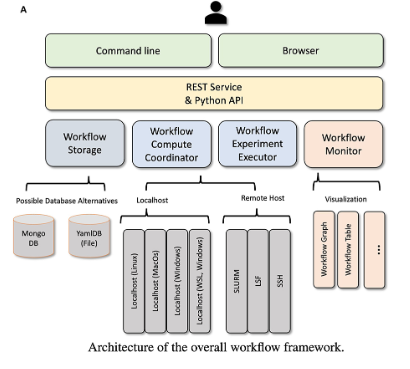

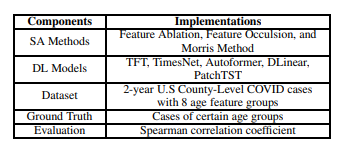

Interpreting deep learning time series models is crucial in understanding the model's behavior and learning patterns from raw data for real-time decision-making. However, the complexity inherent in transformer-based time series models poses challenges in explaining the impact of individual features on predictions. In this study, we leverage recent local interpretation methods to interpret state-of-the-art time series models. To use real-world datasets, we collected three years of daily case data for 3,142 US counties. Firstly, we compare six transformer-based models and choose the best prediction model for COVID-19 infection. Using 13 input features from the last two weeks, we can predict the cases for the next two weeks. Secondly, we present an innovative way to evaluate the prediction sensitivity to 8 population age groups over highly dynamic multivariate infection data. Thirdly, we compare our proposed perturbation-based interpretation method with related work, including a total of eight local interpretation methods. Finally, we apply our framework to traffic and electricity datasets, demonstrating that our approach is generic and can be applied to other time-series domains.

Proceedings of The IEEE International Conference on Digital Health (ICDH). This work won the NSF Student Research Competition Award (3rd Place Prize) July 2023. 2023 July 02; Volume 1 (Issue 1)

Deep learning for time series plays a key role in AI for healthcare. To predict the progress of infectious disease outbreaks and demonstrate clear population-level impact, more granular analyses are urgently needed that control for important and potentially confounding county-level socioeconomic and health factors. We forecast US county-level COVID-19 infections using the Temporal Fusion Transformer (TFT). We focus on heterogeneous time-series deep learning model prediction while interpreting the complex spatiotemporal features learned from the data. The significance of the work is grounded in real-world COVID-19 infection prediction with highly non-stationary, finely granular, and heterogeneous data.

Journal of Frontiers in High-Performance Computing. Located in the NSF Public Access Repository. 2023 October 23

MLCommons is an effort to develop and improve the artificial intelligence (AI) ecosystem through benchmarks, public data sets, and research. It consists of members from start-ups, leading companies, academics, and non-profits from around the world. The goal is to make machine learning better for everyone. To increase participation by others, educational institutions provide valuable opportunities for engagement. In this article, we identify numerous insights obtained from different viewpoints as part of efforts to utilize high-performance computing (HPC) big data systems in existing education while developing and conducting science benchmarks for earthquake prediction.

Accepted to the AAAI-24 Undergraduate Consortium. The 38th Annual AAAI Conference on Artificial Intelligence (AAAI-24), Vancouver, Canada. 2024 February 27.

This work evaluates interpretability methods for time-series deep learning. Sensitivity analysis assesses how input changes affect the output, constituting a key component of interpretation. Among post-hoc interpretation methods such as back-propagation, perturbation, and approximation, my work will investigate perturbation-based sensitivity analysis methods on modern Transformer models to benchmark their performance. Specifically, my work answers three research questions: 1) Do different sensitivity analysis (SA) methods yield comparable outputs and attribute importance rankings? 2) Using the same sensitivity analysis method, do different Deep Learning (DL) models impact the output of the sensitivity analysis? 3) How well do the results from sensitivity analysis methods align with the ground truth?
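To make the first and third research questions concrete, the sketch below compares two perturbation-based sensitivity scores on a toy model whose true feature weights are known. Everything here is an assumption made for illustration: the linear `toy_model`, its weights, the random input shape, and the two perturbation schemes (occlusion and permutation) stand in for the trained Transformer models and the SA methods actually studied.

```python
import numpy as np

def toy_model(x):
    """Hypothetical forecaster: (samples, time, features) -> (samples,)."""
    weights = np.array([3.0, 1.0, 0.0, 2.0])  # known ground-truth importance
    return (x * weights).sum(axis=2).mean(axis=1)

def occlusion_sensitivity(model, x, baseline=0.0):
    """Replace one feature with a baseline value and measure the output change."""
    base = model(x)
    scores = np.zeros(x.shape[2])
    for j in range(x.shape[2]):
        perturbed = x.copy()
        perturbed[:, :, j] = baseline
        scores[j] = np.abs(model(perturbed) - base).mean()
    return scores

def permutation_sensitivity(model, x, rng):
    """Shuffle one feature across samples and measure the output change."""
    base = model(x)
    scores = np.zeros(x.shape[2])
    for j in range(x.shape[2]):
        perturbed = x.copy()
        perturbed[:, :, j] = x[rng.permutation(x.shape[0]), :, j]
        scores[j] = np.abs(model(perturbed) - base).mean()
    return scores

def ranking(scores):
    """Feature indices ordered from most to least sensitive."""
    return np.argsort(-scores)

rng = np.random.default_rng(0)
inputs = rng.standard_normal((500, 14, 4))  # 500 samples, 14 time steps, 4 features

occ = occlusion_sensitivity(toy_model, inputs)
perm = permutation_sensitivity(toy_model, inputs, rng)
print("occlusion ranking:  ", ranking(occ))
print("permutation ranking:", ranking(perm))
```

On a linear toy model both schemes should recover the ordering implied by the weights. The questions above ask whether that agreement survives on nonlinear deep models, where no such ground truth is directly available.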